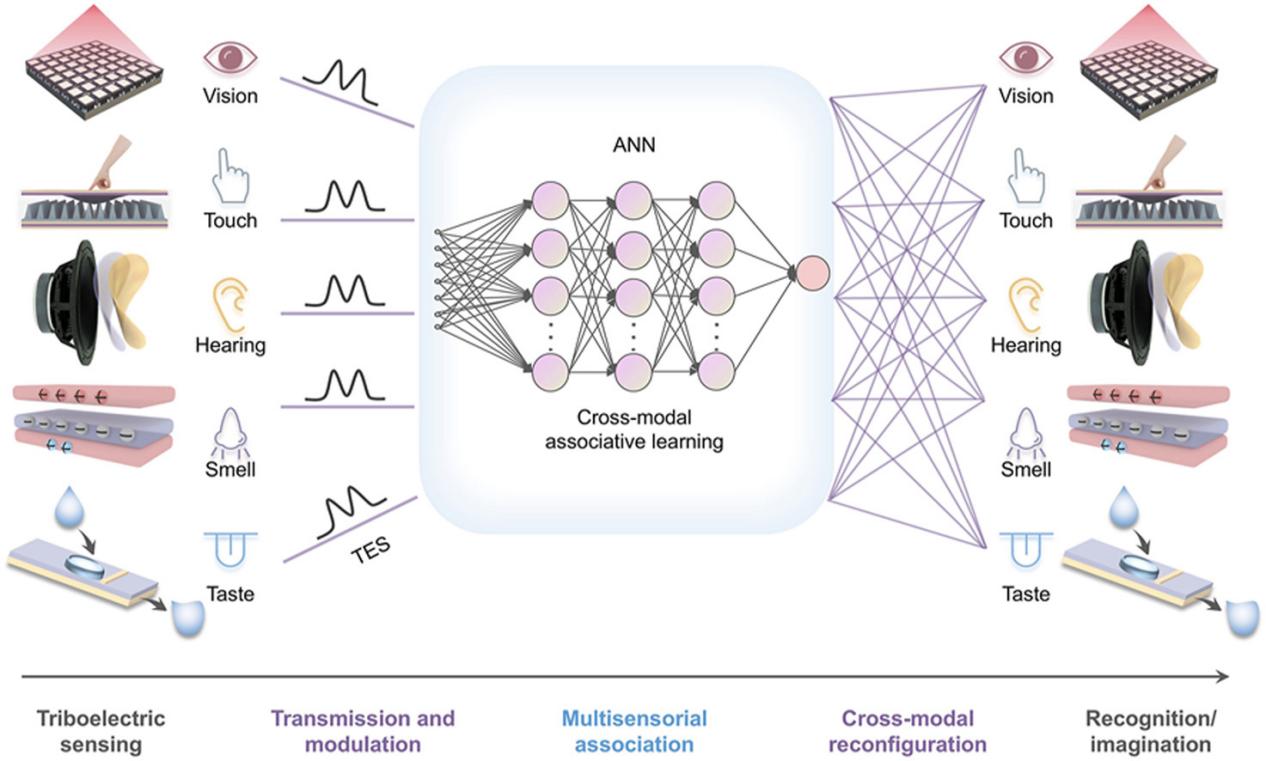

GA, UNITED STATES, May 8, 2026 /EINPresswire.com/ — Human cognition relies on the seamless integration of multiple senses, allowing the brain to associate, infer, and even imagine across modalities. Replicating this capability in artificial systems has long remained a challenge, particularly under strict energy constraints. This study presents a bioinspired multisensory framework that integrates vision, touch, hearing, smell, and taste within a self-powered architecture. By enabling cross-modal association and adaptive reconfiguration, the system allows one sensory input—such as touch or sound—to trigger corresponding representations in other sensory domains. Beyond conventional recognition, the framework demonstrates higher-level cognitive functions, including inference and generative pattern creation. These advances point toward a new generation of intelligent machines capable of human-like perception and cognition.

In humans, sensory information is not processed in isolation. Vision, touch, hearing, smell, and taste interact dynamically to form unified perceptions of the environment. This cross-modal integration enables rapid decision-making, contextual understanding, and even imagination. However, most artificial intelligence systems still rely on isolated sensory channels or energy-intensive centralized processing. Existing multisensory approaches often struggle with scalability, adaptability, and power efficiency, limiting their application in autonomous robots and embodied intelligence. Moreover, artificial systems rarely achieve true cross-modal reconfiguration, where information from one sense can be transformed into another. Based on these challenges, there is a pressing need to develop an energy-efficient multisensory framework capable of human-like cross-modal cognition.

Researchers from the Beijing Institute of Nanoenergy and Nanosystems, the University of Chinese Academy of Sciences, Guangxi University, and Georgia Institute of Technology report a bioinspired multisensory system that closely mimics how the human brain integrates information across senses. Published online in eScience in March 2026 (DOI: 10.1016/j.esci.2025.100482), the study introduces a triboelectric-driven framework that unifies visual, tactile, auditory, olfactory, and gustatory inputs within a self-powered architecture. The system demonstrates high-accuracy cross-modal recognition and adaptive sensory reconfiguration, offering a new pathway toward energy-autonomous robotic cognition.

At the core of the framework is a triboelectric-sensing-mediated artificial neural network (TES-ANN), inspired by the distributed and hierarchical organization of human sensory neurons. Mechanical, acoustic, and material-based stimuli are converted into electrical signals through triboelectric nanogenerators, eliminating the need for external power supplies. These signals are then encoded as neural-like spikes and processed through interconnected artificial sensory modules.

The system demonstrates robust tactile-visual association: handwritten digits and letters sensed through touch are reconstructed as visual images with an accuracy of 97.12%. Similarly, auditory inputs are successfully linked to corresponding visual, olfactory, and gustatory representations, achieving 94.62% accuracy in cross-modal reconfiguration. Beyond empirical learning, the framework exhibits non-empirical cognitive behavior. For example, after learning basic associations between colors and fruits, the system can infer and generate a plausible visual representation of a previously unseen object—such as a “purple strawberry”—based solely on auditory input.

This ability to move from perception to inference and imagination marks a conceptual shift from passive multisensory fusion to active cross-modal cognition. Importantly, all these functions are achieved with high energy efficiency, addressing a key bottleneck in autonomous intelligent systems.

“This work demonstrates that artificial systems can move beyond simple sensory recognition toward genuine cognitive association and imagination,” said the study’s corresponding author. “By combining triboelectric sensing with bioinspired neural architectures, we show that energy-autonomous systems can perform complex cross-modal tasks once thought to be exclusive to biological brains. This opens new opportunities for developing intelligent machines that interact with the world in a far more natural and adaptive way.”

The proposed multisensory framework has broad implications for robotics, human–machine interfaces, and embodied artificial intelligence. Energy-autonomous robots equipped with such systems could operate for extended periods in complex environments, interpreting sensory cues more holistically and responding more intelligently. Potential applications range from assistive prosthetics and rehabilitation devices to immersive virtual-reality systems and camera-free object recognition. By enabling machines to associate, infer, and even imagine across sensory domains, this research lays the groundwork for a new generation of intelligent systems that more closely resemble human cognition, bridging the gap between perception and understanding.

DOI

10.1016/j.esci.2025.100482

Original Source URL

https://doi.org/10.1016/j.esci.2025.100482

Funding information

This work is supported by the National Key Research and Development Program of China (2023YFB3208102), the National Natural Science Foundation of China (52073031), and the “Hundred Talents Program” of the Chinese Academy of Sciences.

Lucy Wang

BioDesign Research

Legal Disclaimer:

EIN Presswire provides this news content “as is” without warranty of any kind. We do not accept any responsibility or liability for the accuracy, content, images, videos, licenses, completeness, legality, or reliability of the information contained in this article. If you have any complaints or copyright issues related to this article, kindly contact the author above.
